Print design output

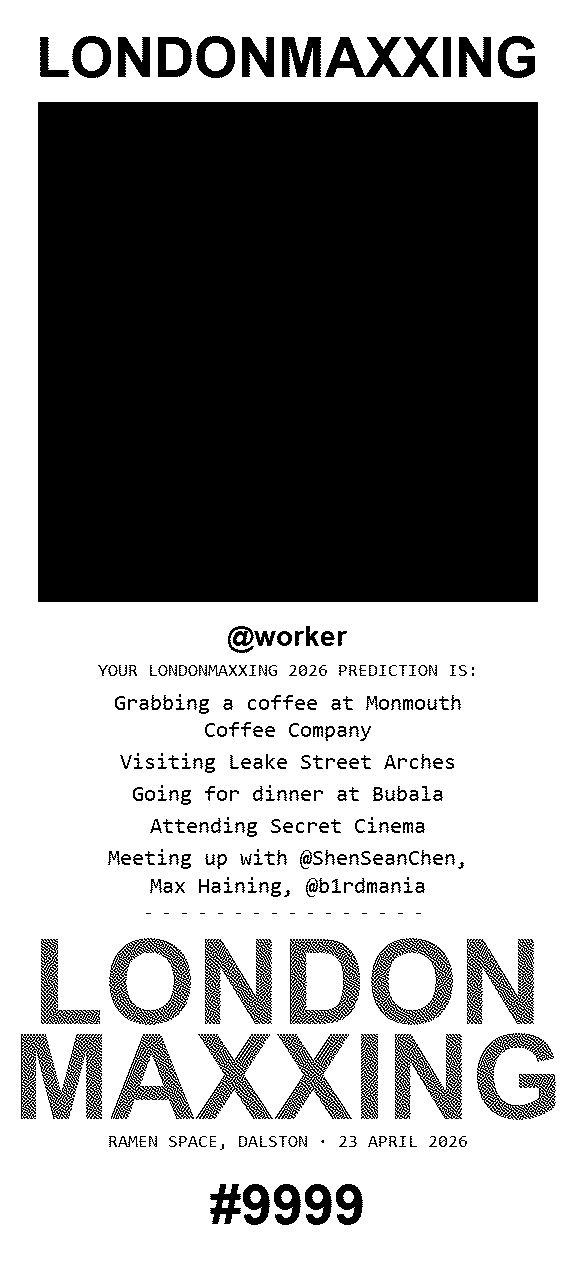

Code that controls the printed receipt, plus a sample render. Rendering runs on the event Mac (Python + PIL + python-escpos). Vercel never touches these files at request time — the Mac pulls the committed code, composes the receipt as a 1-bit PIL image, and sends it to the ZJ-8330 over USB. The sample below was produced by the same pipeline in dry-run mode.

Sample render

worker/out/… → public/design-output-sample.png. To refresh it, run WORKER_DRY_RUN=1 npm run worker:test, then copy the newest PNG from worker/out/ over public/design-output-sample.png.
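The manual copy step above could be scripted. The following is a minimal sketch, assuming it is run from the repository root and that "newest" means most recently modified; the helper names newest_png and refresh_sample are illustrative and do not exist in the codebase.

```python
import shutil
from pathlib import Path


def newest_png(out_dir: Path) -> Path:
    """Return the most recently modified PNG in out_dir."""
    pngs = list(out_dir.glob("*.png"))
    if not pngs:
        raise FileNotFoundError(f"no PNG files in {out_dir}")
    return max(pngs, key=lambda p: p.stat().st_mtime)


def refresh_sample(out_dir: Path, dest: Path) -> Path:
    """Copy the newest dry-run render over the sample image; return the source used."""
    src = newest_png(out_dir)
    dest.parent.mkdir(parents=True, exist_ok=True)
    shutil.copyfile(src, dest)
    return src
```

Calling refresh_sample(Path("worker/out"), Path("public/design-output-sample.png")) after a dry run would then replace the committed sample.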

Receipt layout — worker/print.py

Composes the whole receipt as one 1-bit image: wordmark, dithered selfie, name, prediction lines, halftone footer, QR, serial. The Atkinson dither is a Python port of src/lib/dither.ts, so the booth preview matches the print.

#!/usr/bin/env python3
"""
Worker print script. Called by worker/index.ts with the prediction fields
already resolved (server-side random pick at submit time), composites the
whole receipt as a single 1-bit PIL image, then either sends it to the
ZJ-8330 over USB or saves it to worker/out/ (dry run).

Atkinson dither matches src/lib/dither.ts so the booth preview matches
the print.

Hardware (from the PRD):
    ZiJiang ZJ-8330 / POS-330
    USB VID=0x0416 PID=0x5011, endpoints in_ep=0x81 out_ep=0x03
    ESC/POS, 80mm roll, 576px print width @ 203 DPI

Usage:
    print.py --photo PATH --serial N --claim-url URL --event-id ID
             [--name NAME]
             [--cafe CAFE] [--sight SIGHT] [--restaurant REST]
             [--event EVENT] [--attendees-json JSON]

Exit 0 on success; non-zero with a human message on stderr.
"""

import argparse
import json
import os
import sys
import textwrap
from datetime import datetime
from pathlib import Path

from PIL import Image, ImageDraw, ImageFont

PRINT_WIDTH_PX = 576
PHOTO_SIZE_PX = 500
WORDMARK_TEXT = "LONDONMAXXING"
WORDMARK_STACKED = ("LONDON", "MAXXING")
VENUE_FOOTER = "RAMEN SPACE, DALSTON"
EVENT_DATE_FOOTER = "23 APRIL 2026"
PREDICTION_HEADER = "YOUR LONDONMAXXING 2026 PREDICTION IS:"

DRY_RUN_DIR = Path(__file__).resolve().parent / "out"

USB_VENDOR = 0x0416
USB_PRODUCT = 0x5011
USB_IN_EP = 0x81
USB_OUT_EP = 0x03

# --- Atkinson dither ---------------------------------------------------

def atkinson(img: Image.Image) -> Image.Image:
    gray = img.convert("L")
    pixels = bytearray(gray.tobytes())
    w, h = gray.size
    offsets = [(1, 0), (2, 0), (-1, 1), (0, 1), (1, 1), (0, 2)]
    for y in range(h):
        for x in range(w):
            i = y * w + x
            old = pixels[i]
            new = 0 if old < 128 else 255
            err = (old - new) // 8
            pixels[i] = new
            for dx, dy in offsets:
                nx, ny = x + dx, y + dy
                if 0 <= nx < w and 0 <= ny < h:
                    ni = ny * w + nx
                    pixels[ni] = max(0, min(255, pixels[ni] + err))
    out = Image.frombytes("L", (w, h), bytes(pixels))
    return out.convert("1")

# --- Font loading ------------------------------------------------------

_FONT_CANDIDATES_BOLD = [
    "/System/Library/Fonts/SFCompactDisplay-Bold.otf",
    "/System/Library/Fonts/Helvetica.ttc",
    "/System/Library/Fonts/Supplemental/Arial Bold.ttf",
    "/usr/share/fonts/truetype/dejavu/DejaVuSans-Bold.ttf",
    "/usr/share/fonts/truetype/liberation/LiberationSans-Bold.ttf",
    "C:/Windows/Fonts/arialbd.ttf",
    "C:/Windows/Fonts/tahomabd.ttf",
    "C:/Windows/Fonts/verdanab.ttf",
]

_FONT_CANDIDATES_MONO = [
    "/System/Library/Fonts/Menlo.ttc",
    "/System/Library/Fonts/Monaco.ttf",
    "/usr/share/fonts/truetype/dejavu/DejaVuSansMono.ttf",
    "/usr/share/fonts/truetype/liberation/LiberationMono-Regular.ttf",
    "C:/Windows/Fonts/consola.ttf",
    "C:/Windows/Fonts/cour.ttf",
]


def _load_font(candidates: list[str], size: int) -> ImageFont.FreeTypeFont | ImageFont.ImageFont:
    for path in candidates:
        if os.path.exists(path):
            try:
                return ImageFont.truetype(path, size)
            except Exception:
                continue
    return ImageFont.load_default()

# --- Drawing helpers ---------------------------------------------------

def _text_image(text: str, font: ImageFont.FreeTypeFont | ImageFont.ImageFont,
                pad_top: int = 4, pad_bottom: int = 4,
                align: str = "center") -> Image.Image:
    bbox = font.getbbox(text)
    text_w = bbox[2] - bbox[0]
    text_h = bbox[3] - bbox[1]
    canvas = Image.new("L", (PRINT_WIDTH_PX, text_h + pad_top + pad_bottom), 255)
    draw = ImageDraw.Draw(canvas)
    if align == "center":
        x = (PRINT_WIDTH_PX - text_w) // 2 - bbox[0]
    elif align == "left":
        x = 24 - bbox[0]
    else:
        x = PRINT_WIDTH_PX - text_w - 24 - bbox[0]
    draw.text((x, pad_top - bbox[1]), text, fill=0, font=font)
    return canvas.convert("1")

def render_wordmark() -> Image.Image:
    font = _load_font(_FONT_CANDIDATES_BOLD, 56)
    img = _text_image(WORDMARK_TEXT, font, pad_top=12, pad_bottom=12)
    return atkinson(img.convert("L"))


def render_wordmark_stacked() -> Image.Image:
    """Big dithered LONDON / MAXXING for the footer — the brand flourish."""
    # Auto-shrink until MAXXING fits inside the print width with some margin.
    target_width = PRINT_WIDTH_PX - 24
    size = 130
    font = _load_font(_FONT_CANDIDATES_BOLD, size)
    longest = max(WORDMARK_STACKED, key=len)
    while size > 40:
        bbox = font.getbbox(longest)
        if bbox[2] - bbox[0] <= target_width:
            break
        size -= 4
        font = _load_font(_FONT_CANDIDATES_BOLD, size)

    # Render in mid-grey (not pure black) so Atkinson produces the
    # halftone dot texture inside each letter rather than solid fill.
    ink = 110
    parts: list[Image.Image] = []
    for text in WORDMARK_STACKED:
        bbox = font.getbbox(text)
        text_w = bbox[2] - bbox[0]
        text_h = bbox[3] - bbox[1]
        canvas = Image.new("L", (PRINT_WIDTH_PX, text_h + 8), 255)
        draw = ImageDraw.Draw(canvas)
        x = (PRINT_WIDTH_PX - text_w) // 2 - bbox[0]
        draw.text((x, 4 - bbox[1]), text, fill=ink, font=font)
        parts.append(atkinson(canvas))

    total_h = sum(p.height for p in parts)
    out = Image.new("1", (PRINT_WIDTH_PX, total_h), 1)
    y = 0
    for p in parts:
        out.paste(p, (0, y))
        y += p.height
    return out

def render_header_line(text: str) -> Image.Image:
    font = _load_font(_FONT_CANDIDATES_MONO, 18)
    return _text_image(text, font, pad_top=4, pad_bottom=4)


def render_wrapped_line(prefix: str, value: str, size: int = 22) -> Image.Image:
    font = _load_font(_FONT_CANDIDATES_MONO, size)
    # Prefix on the same logical line as value, wrapped together.
    full = f"{prefix} {value}" if prefix else value
    max_chars = 30
    lines = textwrap.wrap(full, width=max_chars) or [""]
    imgs = []
    for line in lines:
        bbox = font.getbbox(line)
        text_h = bbox[3] - bbox[1]
        canvas = Image.new("L", (PRINT_WIDTH_PX, text_h + 8), 255)
        draw = ImageDraw.Draw(canvas)
        text_w = bbox[2] - bbox[0]
        x = (PRINT_WIDTH_PX - text_w) // 2 - bbox[0]
        draw.text((x, 4 - bbox[1]), line, fill=0, font=font)
        imgs.append(canvas.convert("1"))
    total_h = sum(i.height for i in imgs)
    out = Image.new("1", (PRINT_WIDTH_PX, total_h), 1)
    y = 0
    for i in imgs:
        out.paste(i, (0, y))
        y += i.height
    return out


def render_separator() -> Image.Image:
    return _text_image("- " * 16, _load_font(_FONT_CANDIDATES_MONO, 16))

def render_photo(photo_path: str) -> Image.Image:
    with Image.open(photo_path) as src:
        src = src.convert("RGB")
        w, h = src.size
        edge = min(w, h)
        box = ((w - edge) // 2, (h - edge) // 2, (w + edge) // 2, (h + edge) // 2)
        sq = src.crop(box).resize((PHOTO_SIZE_PX, PHOTO_SIZE_PX), Image.LANCZOS)
    dithered = atkinson(sq)
    canvas = Image.new("1", (PRINT_WIDTH_PX, PHOTO_SIZE_PX), 1)
    canvas.paste(dithered, ((PRINT_WIDTH_PX - PHOTO_SIZE_PX) // 2, 0))
    return canvas


def render_qr(url: str) -> Image.Image:
    import qrcode

    qr = qrcode.QRCode(
        version=None,
        error_correction=qrcode.constants.ERROR_CORRECT_M,
        box_size=7,
        border=2,
    )
    qr.add_data(url)
    qr.make(fit=True)
    img = qr.make_image(fill_color="black", back_color="white").convert("1")
    canvas = Image.new("1", (PRINT_WIDTH_PX, img.height), 1)
    canvas.paste(img, ((PRINT_WIDTH_PX - img.width) // 2, 0))
    return canvas


def render_serial(serial: int) -> Image.Image:
    label = f"#{serial:04d}"
    font = _load_font(_FONT_CANDIDATES_BOLD, 56)
    return _text_image(label, font, pad_top=16, pad_bottom=4)


def blank(height: int) -> Image.Image:
    return Image.new("1", (PRINT_WIDTH_PX, height), 1)

# --- Compose -----------------------------------------------------------

def compose(
    photo_path: str,
    serial: int,
    claim_url: str,
    name: str | None,
    cafe: str | None,
    sight: str | None,
    restaurant: str | None,
    event_pick: str | None,
    attendees: list[str],
) -> Image.Image:
    # Note: claim_url is accepted but never drawn in this listing;
    # render_qr() is defined above and is not called from compose().
    blocks: list[Image.Image] = []
    blocks.append(blank(24))
    blocks.append(render_wordmark())
    blocks.append(blank(12))
    blocks.append(render_photo(photo_path))
    blocks.append(blank(16))

    if name:
        header_font = _load_font(_FONT_CANDIDATES_BOLD, 28)
        blocks.append(_text_image(name, header_font, pad_top=8, pad_bottom=4))
        blocks.append(blank(4))

    blocks.append(render_header_line(PREDICTION_HEADER))
    blocks.append(blank(10))

    body_size = 22
    if cafe:
        blocks.append(render_wrapped_line("Grabbing a coffee at", cafe, size=body_size))
        blocks.append(blank(4))
    if sight:
        blocks.append(render_wrapped_line("Visiting", sight, size=body_size))
        blocks.append(blank(4))
    if restaurant:
        blocks.append(render_wrapped_line("Going for dinner at", restaurant, size=body_size))
        blocks.append(blank(4))
    if event_pick:
        blocks.append(render_wrapped_line("Attending", event_pick, size=body_size))
        blocks.append(blank(4))
    if attendees:
        blocks.append(render_wrapped_line("Meeting up with", ", ".join(attendees), size=body_size))

    blocks.append(blank(8))
    blocks.append(render_separator())
    blocks.append(blank(12))
    blocks.append(render_wordmark_stacked())
    blocks.append(blank(8))
    blocks.append(render_header_line(f"{VENUE_FOOTER} \u00B7 {EVENT_DATE_FOOTER}"))
    blocks.append(blank(14))
    blocks.append(render_serial(serial))
    blocks.append(blank(36))

    # Stack every block vertically on one full-width 1-bit canvas.
    total_h = sum(b.height for b in blocks)
    canvas = Image.new("1", (PRINT_WIDTH_PX, total_h), 1)
    y = 0
    for b in blocks:
        canvas.paste(b, (0, y))
        y += b.height
    return canvas

# --- Output ------------------------------------------------------------

def send_usb(img: Image.Image) -> None:
    from escpos.printer import Usb

    printer = Usb(
        USB_VENDOR,
        USB_PRODUCT,
        in_ep=USB_IN_EP,
        out_ep=USB_OUT_EP,
        timeout=0,
    )
    try:
        printer.image(img, impl="bitImageRaster")
        printer.cut()
    finally:
        try:
            printer.close()
        except Exception:
            pass


def save_dry_run(img: Image.Image, serial: int, event_id: str) -> Path:
    DRY_RUN_DIR.mkdir(parents=True, exist_ok=True)
    ts = datetime.now().strftime("%H%M%S")
    path = DRY_RUN_DIR / f"{event_id}-{serial:04d}-{ts}.png"
    img.save(path)
    return path

# --- Main --------------------------------------------------------------

def main() -> int:
    parser = argparse.ArgumentParser()
    parser.add_argument("--photo", required=True)
    parser.add_argument("--serial", required=True, type=int)
    parser.add_argument("--claim-url", required=True)
    parser.add_argument("--event-id", required=True)
    parser.add_argument("--name", default=None)
    parser.add_argument("--cafe", default=None)
    parser.add_argument("--sight", default=None)
    parser.add_argument("--restaurant", default=None)
    parser.add_argument("--event", dest="event_pick", default=None)
    parser.add_argument("--attendees-json", default=None)
    args = parser.parse_args()

    attendees: list[str] = []
    if args.attendees_json:
        try:
            attendees = list(json.loads(args.attendees_json))
        except Exception as e:
            print(f"--attendees-json parse failed: {e}", file=sys.stderr)
            return 1

    img = compose(
        photo_path=args.photo,
        serial=args.serial,
        claim_url=args.claim_url,
        name=args.name,
        cafe=args.cafe,
        sight=args.sight,
        restaurant=args.restaurant,
        event_pick=args.event_pick,
        attendees=attendees,
    )

    if os.environ.get("WORKER_DRY_RUN") == "1":
        path = save_dry_run(img, args.serial, args.event_id)
        print(f"[print] dry run — receipt saved to {path}")
        return 0

    try:
        send_usb(img)
    except Exception as e:
        print(f"USB print failed: {e}", file=sys.stderr)
        return 1
    return 0


if __name__ == "__main__":
    sys.exit(main())
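For local testing, the script above can be driven from another Python process instead of worker/index.ts. The sketch below only assembles the command line and environment, using the flags from the usage docstring and the WORKER_DRY_RUN switch read in main(). The helper names build_print_command and dry_run_env are hypothetical, as are any argument values passed to them.

```python
import json
import os
import sys
from pathlib import Path


def build_print_command(script, photo, serial, claim_url, event_id, *,
                        name=None, cafe=None, sight=None, restaurant=None,
                        event_pick=None, attendees=None) -> list[str]:
    """Build an argv list for print.py; optional fields are skipped when empty."""
    argv = [
        sys.executable, str(script),
        "--photo", str(photo),
        "--serial", str(serial),
        "--claim-url", claim_url,
        "--event-id", event_id,
    ]
    optional = {
        "--name": name,
        "--cafe": cafe,
        "--sight": sight,
        "--restaurant": restaurant,
        "--event": event_pick,
    }
    for flag, value in optional.items():
        if value:
            argv += [flag, value]
    if attendees:
        # print.py parses this flag with json.loads and expects a list.
        argv += ["--attendees-json", json.dumps(attendees)]
    return argv


def dry_run_env(base=None) -> dict[str, str]:
    """Copy the environment and set the dry-run switch that main() checks."""
    env = dict(os.environ if base is None else base)
    env["WORKER_DRY_RUN"] = "1"
    return env
```

Passing the result to subprocess.run(cmd, env=dry_run_env(), check=True) would render a PNG into worker/out/ without touching the printer, assuming Pillow is installed and the photo path exists.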

Dither (client) — src/lib/dither.ts

TypeScript port of the same Atkinson algorithm. Used on the booth page for the live selfie preview so what the guest sees is what prints.

// Atkinson dithering: 1/8 of the error goes to each of 6 neighbours; the remaining 2/8 is dropped.
// Gives the distinctive Mac Classic / thermal-receipt look.
// Client-side twin of the Python version in the worker (slice 5).

const OFFSETS: Array<[number, number]> = [
  [1, 0],
  [2, 0],
  [-1, 1],
  [0, 1],
  [1, 1],
  [0, 2],
];

export function atkinson(imageData: ImageData): ImageData {
  const { width, height, data } = imageData;
  const out = new Uint8ClampedArray(data);

  // Convert to grayscale in place, using luminance weighting.
  for (let i = 0; i < out.length; i += 4) {
    const gray = 0.299 * out[i] + 0.587 * out[i + 1] + 0.114 * out[i + 2];
    out[i] = out[i + 1] = out[i + 2] = gray;
  }

  for (let y = 0; y < height; y++) {
    for (let x = 0; x < width; x++) {
      const idx = (y * width + x) * 4;
      const old = out[idx];
      const next = old < 128 ? 0 : 255;
      const err = (old - next) / 8;
      out[idx] = out[idx + 1] = out[idx + 2] = next;
      for (const [dx, dy] of OFFSETS) {
        const nx = x + dx;
        const ny = y + dy;
        if (nx < 0 || nx >= width || ny < 0 || ny >= height) continue;
        const nidx = (ny * width + nx) * 4;
        out[nidx] = Math.max(0, Math.min(255, out[nidx] + err));
        out[nidx + 1] = out[nidx];
        out[nidx + 2] = out[nidx];
      }
    }
  }

  return new ImageData(out, width, height);
}
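One detail worth checking when comparing the two dithers is how each rounds the diffused error. print.py uses integer floor division, while dither.ts keeps a fractional error and lets the Uint8ClampedArray store round it to the nearest integer, with halves going to even. The sketch below models a single diffusion step under each convention. The function names are illustrative, and Python's round() is used here as a stand-in for the typed-array store.

```python
def threshold(old: int) -> int:
    """Quantise a grey level to black (0) or white (255)."""
    return 0 if old < 128 else 255


def diffuse_floor(old: int, neighbour: int) -> int:
    """print.py convention: integer error via floor division."""
    err = (old - threshold(old)) // 8
    return max(0, min(255, neighbour + err))


def diffuse_clamped(old: int, neighbour: int) -> int:
    """dither.ts convention: fractional error, rounded when stored."""
    err = (old - threshold(old)) / 8
    # round() sends halves to the even integer, like a Uint8ClampedArray store.
    return max(0, min(255, round(neighbour + err)))


def mismatches(neighbour: int = 128) -> list[int]:
    """Source grey levels for which the two conventions disagree."""
    return [
        old for old in range(256)
        if diffuse_floor(old, neighbour) != diffuse_clamped(old, neighbour)
    ]
```

Under this model the two conventions never differ by more than one grey level per step, but they do differ for some inputs, so a pixel-for-pixel comparison of the preview and the print would need a small tolerance or a shared rounding rule.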

Booth page flow — src/app/booth/[eventId]/booth-client.tsx

The web-side UX: capture → step1 (selfie + name + Generate) → generating loader → queued with live prediction preview. This is the *frame* guests see; what prints is defined by print.py above.
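The flow described above can be summarised as a transition table. This is a rough sketch read off the setPhase calls in booth-client.tsx, not code from the repository; TRANSITIONS, GATE and can_transition are illustrative names.

```python
# Phases in which the status poll is allowed to move the booth on its own.
GATE = {"loading", "closed-pre", "closed-post", "paused"}

TRANSITIONS: dict[str, set[str]] = {
    # The status poll re-evaluates gate phases every 10 seconds.
    **{g: {"capture", "closed-pre", "closed-post", "paused"} for g in GATE},
    "capture": {"step1"},                # Capture button or upload fallback
    "step1": {"generating", "capture"},  # Generate, or Retake
    "generating": {"queued", "error"},   # submit succeeded or failed
    "queued": set(),                     # job polling updates fields only
    "error": {"step1", "capture"},       # Try again, with or without a kept photo
}


def can_transition(src: str, dst: str) -> bool:
    """Return True if the listing contains a setPhase call from src to dst."""
    if dst == "error":
        # A failed status fetch sets the error phase regardless of the current one.
        return True
    return dst in TRANSITIONS.get(src, set())


def is_valid_path(path: list[str]) -> bool:
    """Check that each consecutive pair of phases is an allowed transition."""
    return all(can_transition(a, b) for a, b in zip(path, path[1:]))
```

For example, is_valid_path(["loading", "capture", "step1", "generating", "queued"]) holds for the happy path, while a jump from "queued" back to "capture" is rejected.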

"use client";

import { useCallback, useEffect, useRef, useState } from "react";
import { Turnstile, type TurnstileInstance } from "@marsidev/react-turnstile";

type Event = {
  id: string;
  name: string;
  location: string;
  startAt: string;
  endAt: string;
};

type Prediction = {
  cafe: string | null;
  sight: string | null;
  restaurant: string | null;
  event: string | null;
  attendees: string[];
};

type Phase =
  | { kind: "loading" }
  | { kind: "closed-pre"; startAt: string }
  | { kind: "closed-post" }
  | { kind: "paused" }
  | { kind: "capture" }
  | { kind: "step1"; blob: Blob; dataUrl: string }
  | { kind: "generating" }
  | {
      kind: "queued";
      jobId: string;
      serialNumber: number;
      queuePosition: number;
      estimatedSeconds: number;
      jobStatus: string;
      prediction: Prediction;
      name: string;
    }
  | { kind: "error"; message: string; blob?: Blob; dataUrl?: string };

const OUTPUT_MAX_EDGE = 1024;

export function BoothClient({
  event,
  turnstileSiteKey,
}: {
  event: Event;
  turnstileSiteKey: string | null;
}) {
  const [phase, setPhase] = useState<Phase>({ kind: "loading" });
  const [printerOnline, setPrinterOnline] = useState(true);
  const [facingMode, setFacingMode] = useState<"user" | "environment">("user");
  const [name, setName] = useState("");
  const [turnstileToken, setTurnstileToken] = useState<string | null>(null);
  const videoRef = useRef<HTMLVideoElement | null>(null);
  const streamRef = useRef<MediaStream | null>(null);
  const turnstileRef = useRef<TurnstileInstance | null>(null);

  // --- Poll booth status (event window + printer + boothActive) -----
  useEffect(() => {
    let cancelled = false;
    async function check() {
      try {
        const res = await fetch(`/api/booth/status?eventId=${event.id}`);
        if (!res.ok) throw new Error("status fetch failed");
        const data = (await res.json()) as {
          boothActive: boolean;
          printerOnline: boolean;
          startAt: string;
          endAt: string;
        };
        if (cancelled) return;
        setPrinterOnline(data.printerOnline);
        setPhase((cur) => {
          const transitionable =
            cur.kind === "loading" ||
            cur.kind === "closed-pre" ||
            cur.kind === "closed-post" ||
            cur.kind === "paused";
          if (!transitionable) return cur;
          if (data.boothActive) return { kind: "capture" };
          const now = Date.now();
          if (now < new Date(data.startAt).getTime()) {
            return { kind: "closed-pre", startAt: data.startAt };
          }
          if (now > new Date(data.endAt).getTime()) {
            return { kind: "closed-post" };
          }
          return { kind: "paused" };
        });
      } catch {
        if (!cancelled)
          setPhase({ kind: "error", message: "Can't reach the booth right now." });
      }
    }
    check();
    const int = setInterval(check, 10_000);
    return () => {
      cancelled = true;
      clearInterval(int);
    };
  }, [event.id]);

  // --- Acquire camera when in capture phase -------------------------
  useEffect(() => {
    if (phase.kind !== "capture") return;
    let active = true;
    (async () => {
      try {
        streamRef.current?.getTracks().forEach((t) => t.stop());
        const stream = await navigator.mediaDevices.getUserMedia({
          video: { facingMode, width: { ideal: 1280 }, height: { ideal: 1280 } },
          audio: false,
        });
        if (!active) {
          stream.getTracks().forEach((t) => t.stop());
          return;
        }
        streamRef.current = stream;
        if (videoRef.current) {
          videoRef.current.srcObject = stream;
          await videoRef.current.play().catch(() => {});
        }
      } catch {
        // getUserMedia denied — upload fallback stays available.
      }
    })();
    return () => {
      active = false;
      streamRef.current?.getTracks().forEach((t) => t.stop());
      streamRef.current = null;
    };
  }, [phase.kind, facingMode]);

  // --- Capture selfie ----------------------------------------------
  const capture = useCallback(async () => {
    const video = videoRef.current;
    if (!video || !video.videoWidth) return;
    const vw = video.videoWidth;
    const vh = video.videoHeight;
    const size = Math.min(vw, vh);
    const targetEdge = Math.min(size, OUTPUT_MAX_EDGE);
    const canvas = document.createElement("canvas");
    canvas.width = targetEdge;
    canvas.height = targetEdge;
    const ctx = canvas.getContext("2d");
    if (!ctx) return;
    const sx = (vw - size) / 2;
    const sy = (vh - size) / 2;
    // Un-mirror the front camera preview so the saved image reads correctly.
    if (facingMode === "user") {
      ctx.translate(targetEdge, 0);
      ctx.scale(-1, 1);
    }
    ctx.drawImage(video, sx, sy, size, size, 0, 0, targetEdge, targetEdge);
    canvas.toBlob(
      (blob) => {
        if (!blob) return;
        const dataUrl = canvas.toDataURL("image/jpeg", 0.9);
        setPhase({ kind: "step1", blob, dataUrl });
      },
      "image/jpeg",
      0.9,
    );
  }, [facingMode]);

  // --- Upload fallback (re-encode through canvas) ------------------
  const onUpload = useCallback((file: File) => {
    const reader = new FileReader();
    reader.onload = () => {
      const dataUrl = reader.result as string;
      const img = new Image();
      img.onload = () => {
        const srcSize = Math.min(img.naturalWidth, img.naturalHeight);
        const size = Math.min(srcSize, OUTPUT_MAX_EDGE);
        const canvas = document.createElement("canvas");
        canvas.width = size;
        canvas.height = size;
        const ctx = canvas.getContext("2d");
        if (!ctx) return;
        ctx.drawImage(
          img,
          (img.naturalWidth - srcSize) / 2,
          (img.naturalHeight - srcSize) / 2,
          srcSize,
          srcSize,
          0,
          0,
          size,
          size,
        );
        canvas.toBlob(
          (blob) => {
            if (!blob) return;
            setPhase({
              kind: "step1",
              blob,
              dataUrl: canvas.toDataURL("image/jpeg", 0.9),
            });
          },
          "image/jpeg",
          0.9,
        );
      };
      img.src = dataUrl;
    };
    reader.readAsDataURL(file);
  }, []);

  // --- Generate (submit) -------------------------------------------
  const generate = useCallback(async () => {
    if (phase.kind !== "step1") return;
    if (!name.trim()) return;
    const blob = phase.blob;
    const dataUrl = phase.dataUrl;
    setPhase({ kind: "generating" });

    // Refresh Turnstile right before submit (tokens expire ~300s).
    let token = turnstileToken;
    if (turnstileSiteKey) {
      try {
        turnstileRef.current?.reset();
        const fresh = await turnstileRef.current?.getResponsePromise(10_000);
        if (fresh) token = fresh;
      } catch {}
      if (!token) {
        setPhase({
          kind: "error",
          message: "Human check expired. Tap Try again to re-run it.",
          blob,
          dataUrl,
        });
        return;
      }
    }

    const form = new FormData();
    form.append("photo", blob, "selfie.jpg");
    form.append("eventId", event.id);
    form.append("handle", name.trim());
    if (token) form.append("turnstileToken", token);

    try {
      const res = await fetch("/api/submit", { method: "POST", body: form });
      const data = await res.json();
      if (!res.ok) {
        setPhase({ kind: "error", message: data.error ?? "Submit failed.", blob, dataUrl });
        return;
      }
      setPhase({
        kind: "queued",
        jobId: data.jobId,
        serialNumber: data.serialNumber,
        queuePosition: data.queuePosition,
        estimatedSeconds: data.estimatedSeconds,
        jobStatus: "pending",
        prediction: data.prediction,
        name: name.trim(),
      });
    } catch {
      setPhase({ kind: "error", message: "Network error. Try again.", blob, dataUrl });
    }
  }, [phase, name, turnstileToken, turnstileSiteKey, event.id]);

  // --- Poll job status once queued ---------------------------------
  const queuedJobId = phase.kind === "queued" ? phase.jobId : null;
  useEffect(() => {
    if (!queuedJobId) return;
    let cancelled = false;
    const poll = async () => {
      try {
        const res = await fetch(`/api/booth/job/${queuedJobId}`);
        if (!res.ok) return;
        const data = await res.json();
        if (cancelled) return;
        setPhase((cur) =>
          cur.kind === "queued"
            ? {
                ...cur,
                jobStatus: data.status,
                queuePosition: data.queuePosition ?? cur.queuePosition,
                estimatedSeconds: data.estimatedSeconds ?? cur.estimatedSeconds,
              }
            : cur,
        );
      } catch {}
    };
    const int = setInterval(poll, 3000);
    return () => {
      cancelled = true;
      clearInterval(int);
    };
  }, [queuedJobId]);

  // --- render ------------------------------------------------------
  if (phase.kind === "loading") return <Frame>Loading booth…</Frame>;

  if (phase.kind === "closed-pre") {
    const when = new Date(phase.startAt).toLocaleString("en-GB", {
      hour: "2-digit",
      minute: "2-digit",
      day: "2-digit",
      month: "short",
    });
    return (
      <Frame>
        <H>Doors open at {when}</H>
        <P>Come back then. The booth goes live when the event starts.</P>
      </Frame>
    );
  }

  if (phase.kind === "closed-post") {
    return (
      <Frame>
        <H>Meetup 001 has ended.</H>
        <P>Thanks for being here. If you got a receipt, scan its QR to claim your stamp.</P>
      </Frame>
    );
  }

  if (phase.kind === "paused") {
    return (
      <Frame>
        <H>Booth paused.</H>
        <P>We're on a quick break — this page will refresh automatically when it's back up.</P>
      </Frame>
    );
  }

  if (phase.kind === "error") {
    const backToStep1 = phase.blob && phase.dataUrl;
    return (
      <Frame>
        <H>Something broke.</H>
        <P>{phase.message}</P>
        <button
          className={btnClass}
          onClick={() => {
            turnstileRef.current?.reset();
            setTurnstileToken(null);
            if (backToStep1) {
              setPhase({ kind: "step1", blob: phase.blob!, dataUrl: phase.dataUrl! });
            } else {
              setPhase({ kind: "capture" });
            }
          }}
        >
          Try again
        </button>
      </Frame>
    );
  }

  if (phase.kind === "generating") {
    return (
      <Frame>
        <H>Collecting positive vibes & Londonmaxxing energy…</H>
        <P>Generating your 2026 prediction. This takes a few seconds.</P>
      </Frame>
    );
  }

  if (phase.kind === "queued") {
    return (
      <Frame>
        <H>#{String(phase.serialNumber).padStart(4, "0")} is yours</H>
        <P>
          {phase.jobStatus === "pending" && phase.queuePosition > 0 && (
            <>You're #{phase.queuePosition} in queue, ~{phase.estimatedSeconds}s.</>
          )}
          {phase.jobStatus === "printing" && <>Printing now — walk to the booth.</>}
          {phase.jobStatus === "printed" && <>Printed. Grab your receipt from the booth.</>}
          {phase.jobStatus === "failed" && <>Something went wrong. Ask staff.</>}
        </P>
        {!printerOnline && phase.jobStatus === "pending" && (
          <P className="text-orange-500">
            Printer looks offline — prints may be delayed. You're still in the queue.
          </P>
        )}
        <div className="mt-6 border-t border-foreground/20 pt-4 text-xs tracking-tight opacity-80">
          <div className="font-semibold">Your Londonmaxxing 2026 prediction</div>
          <ul className="mt-2 space-y-1">
            {phase.prediction.cafe && <li>Grabbing a coffee at {phase.prediction.cafe}</li>}
            {phase.prediction.sight && <li>Visiting {phase.prediction.sight}</li>}
            {phase.prediction.restaurant && <li>Going for dinner at {phase.prediction.restaurant}</li>}
            {phase.prediction.event && <li>Attending {phase.prediction.event}</li>}
            {phase.prediction.attendees.length > 0 && (
              <li>Meeting up with {phase.prediction.attendees.join(", ")}</li>
            )}
          </ul>
        </div>
      </Frame>
    );
  }

  if (phase.kind === "capture") {
    return (
      <Frame>
        <H>Snap a selfie</H>
        {!printerOnline && (
          <P className="text-orange-500">Printer offline — prints may be delayed.</P>
        )}
        <div className="relative mt-4 aspect-square w-full overflow-hidden border border-foreground/20 bg-black">
          <video
            ref={videoRef}
            playsInline
            muted
            autoPlay
            className="h-full w-full object-cover"
            style={{ transform: facingMode === "user" ? "scaleX(-1)" : "none" }}
          />
          <div className="pointer-events-none absolute inset-4 border border-white/60" />
        </div>
        <div className="mt-3 flex gap-2">
          <button
            className={btnClass}
            onClick={() => setFacingMode((m) => (m === "user" ? "environment" : "user"))}
          >
            Flip
          </button>
          <button className={btnPrimary} onClick={capture}>
            Capture
          </button>
          <label className={btnClass}>
            Upload
            <input
              type="file"
              accept="image/*"
              capture="user"
              className="hidden"
              onChange={(e) => {
                const f = e.target.files?.[0];
                if (f) onUpload(f);
              }}
            />
          </label>
        </div>
      </Frame>
    );
  }

  // step1 — enter details
  return (
    <Frame>
      <H>LONDONMAXXING</H>
      <P>Enter your name, generate your 2026 prediction.</P>

      <label className="mt-6 block text-xs uppercase tracking-tight">Name / handle</label>
      <input
        type="text"
        value={name}
        onChange={(e) => setName(e.target.value)}
        maxLength={60}
        autoComplete="off"
        className="mt-1 w-full border border-foreground/20 bg-transparent p-2 font-mono text-sm"
        placeholder="@you or Your Name"
        autoFocus
      />

      <label className="mt-4 block text-xs uppercase tracking-tight">Your selfie</label>
      <div className="mt-1 aspect-square w-full max-w-xs overflow-hidden border border-foreground/20 bg-black/5">
        {/* eslint-disable-next-line @next/next/no-img-element */}
        <img src={phase.dataUrl} alt="selfie" className="h-full w-full object-cover" />
      </div>
      <button
        className={`${btnClass} mt-2 w-max`}
        onClick={() => setPhase({ kind: "capture" })}
      >
        Retake
      </button>

      {turnstileSiteKey && (
        <div className="mt-4">
          <Turnstile
            ref={turnstileRef}
            siteKey={turnstileSiteKey}
            onSuccess={setTurnstileToken}
            onError={() => setTurnstileToken(null)}
            onExpire={() => setTurnstileToken(null)}
            options={{ theme: "auto", refreshExpired: "auto" }}
          />
        </div>
      )}

      <button
        onClick={generate}
        disabled={!name.trim() || (turnstileSiteKey !== null && !turnstileToken)}
        className="mt-6 inline-flex w-full cursor-pointer items-center justify-center border border-foreground bg-foreground px-4 py-3 text-sm font-mono tracking-tight uppercase text-background hover:opacity-90 disabled:opacity-40"
      >
        Generate
      </button>
    </Frame>
  );
}

function Frame({ children }: { children: React.ReactNode }) {
  return (
    <div className="flex min-h-screen flex-col font-mono">
      <div className="mx-auto flex w-full max-w-md flex-1 flex-col px-5 py-8 md:max-w-lg">
        {children}
      </div>
    </div>
  );
}

function H({ children }: { children: React.ReactNode }) {
  return (
    <h1 className="text-xl leading-tight tracking-tight uppercase md:text-2xl">
      {children}
    </h1>
  );
}

function P({ children, className }: { children: React.ReactNode; className?: string }) {
  return (
    <p className={`mt-3 text-sm leading-snug tracking-tight ${className ?? ""}`}>
      {children}
    </p>
  );
}

const btnClass =
  "inline-flex cursor-pointer items-center justify-center border border-foreground/20 bg-transparent px-4 py-2 text-sm font-mono tracking-tight hover:bg-foreground/5";

const btnPrimary =
  "inline-flex flex-1 cursor-pointer items-center justify-center border border-foreground bg-foreground px-4 py-2 text-sm font-mono tracking-tight text-background hover:opacity-90 disabled:opacity-40";